The State of AI Native - Telephony

The infrastructure layer is still catching up. Here's why that matters.

This is the first in a series on what AI-native actually means, built from years inside the infrastructure — not observing it from outside. Each post goes deep on one segment. A consolidated piece follows once the series is complete.

There is a version of the AI-native telephony story that is easy to tell. Voice AI is here. Conversational agents are replacing call centre workers. The phone call, once a human-to-human interaction, is becoming a human-to-machine one. Companies are automating outreach, collections, and support. The market is moving fast. The technology is impressive.

TL;DR: The AI-native telephony story is more complicated than the market suggests. The ideas being built today were imagined a decade ago inside companies like Exotel. They were shelved because the models didn’t exist yet, not because the vision was wrong. The intelligence layer has finally caught up. The infrastructure layer hasn’t. 95% of generative AI pilots are failing or underperforming. Fewer than 1% of contact centres have autonomous agents in production. The new builders understand the models but keep hitting the same walls: concurrency, latency, physical circuit limits, and in India, 22 languages and a regulatory framework most platforms discover after they’re already in production. For Indian incumbents specifically, there is a 24–36 month window to capture the multilingual voice AI market before global platforms close the gap. And the per-minute pricing model that built the CPaaS industry is quietly being made obsolete by AI companies selling outcomes instead of infrastructure. This is a field report from someone who was inside the infrastructure.

The numbers confirm the trajectory. The global voice AI agents market sat at $2.4 billion in 2024. It is projected to reach $47.5 billion by 2034 — a compound annual growth rate of 34.8%. In India specifically, the CPaaS market crossed $1.1 billion in 2025 and is on a 23–26% annual growth path, driven by BFSI, logistics, and healthcare embedding programmable voice into their core operations. Voice AI venture funding nearly septupled in two years, from roughly $315 million in 2022 to $2.1 billion in 2024. The money is following the conviction.
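The growth rate quoted above can be checked from the two endpoint figures. This is a quick sketch, assuming the 2024 and 2034 values bracket exactly ten compounding years; the function name `cagr` is only for illustration.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


# Market figures quoted in the text, in billions of US dollars.
voice_ai_growth = cagr(2.4, 47.5, years=10)
print(f"Voice AI agents market CAGR: {voice_ai_growth:.1%}")

# Venture funding figures quoted in the text, in millions of US dollars.
funding_multiple = 2100 / 315
print(f"Funding multiple, 2022 to 2024: {funding_multiple:.1f}x")
```

The endpoints are consistent with the stated 34.8%, and the funding multiple comes out a little under seven, which matches "nearly septupled".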

[Stat cards: voice AI agents market 2024 ($2.4 billion); projected by 2034 ($47.5 billion); CAGR 2025–2034 (34.8%); India CPaaS market 2025 ($1.1 billion)]

That conviction is showing up in financial results too. Twilio — the default telephony infrastructure for most AI voice stacks — reported Voice AI customers growing nearly 60% year on year in Q3 2025, with a 10x revenue increase from its top ten Voice AI startup customers. Conversation Relay call volume more than tripled quarter over quarter. The infrastructure layer is seeing real demand, not just funding announcements.

And yet beneath the growth numbers sits a different statistic that rarely makes the headline: 95% of generative AI pilots are either failing outright or severely underperforming expectations, according to MIT and McKinsey research from 2025. Fully autonomous AI voice agents (not demos, not pilots, but live production systems) were running in fewer than 1% of contact centres as of 2024. The gap between the market narrative and the deployment reality is wide. It is not closing as fast as the funding rounds suggest.

That version of the story is true. It is also incomplete in a way that matters.

The part of the story that doesn’t get told — because most of the people telling it weren’t there — is what it actually took to build reliable telephony infrastructure in the first place. What the hard problems were. What was tried and abandoned before the models existed to make it work. And why the new generation of AI voice companies, building elegantly on top of OpenAI and Anthropic and Gemini, keep running into walls that feel new to them but are recognisable to anyone who spent time inside the infrastructure.

I spent time inside the infrastructure. This is what I saw.

The Problem Was Never Intelligence. It Was Reliability.

When I was at Exotel, the problem we were solving was not smart communication. It was reliable communication. Those are different problems, and conflating them leads you to build the wrong things.

India at that time — and in many ways still — was a country where physical infrastructure failed in ways that software-only companies never had to think about. Road construction could damage the cables connecting your servers to the internet. Not metaphorically. Literally — a JCB digging a trench for a new road would cut through a fibre line, and a region would go dark. Cellular networks had coverage gaps that weren’t on any official map. You found them when your calls started dropping and you couldn’t explain why from the software layer alone.

And then there were the events nobody planned for. When the 2015 Chennai floods took down an entire data centre, it wasn’t a software incident. It was a reminder that the infrastructure underneath all the abstraction was still physical, still vulnerable, and still capable of going dark in ways no SLA had ever accounted for.

What I remember most from that period isn’t the outage. It’s what we built in response to it. During the floods, Exotel ran an initiative where people stranded and in need of rescue could simply make a call or send an SMS — no app, no internet, no smartphone required. That call would automatically trigger a tweet, putting their location and situation in front of people who could help. Telcos were overwhelmed. Formal rescue coordination was breaking down.

A small team had built something that worked on the most basic communication primitives available — a call, a text — and connected it to the one channel that was still moving information: social media. Vishnu Jayadevan and I built this in the middle of the crisis, with whatever we had.

It worked. People got rescued.

I think about that whenever someone tells me the telephony layer is a commodity to be abstracted away. It isn’t. At the moments that matter most, it is the only thing left.

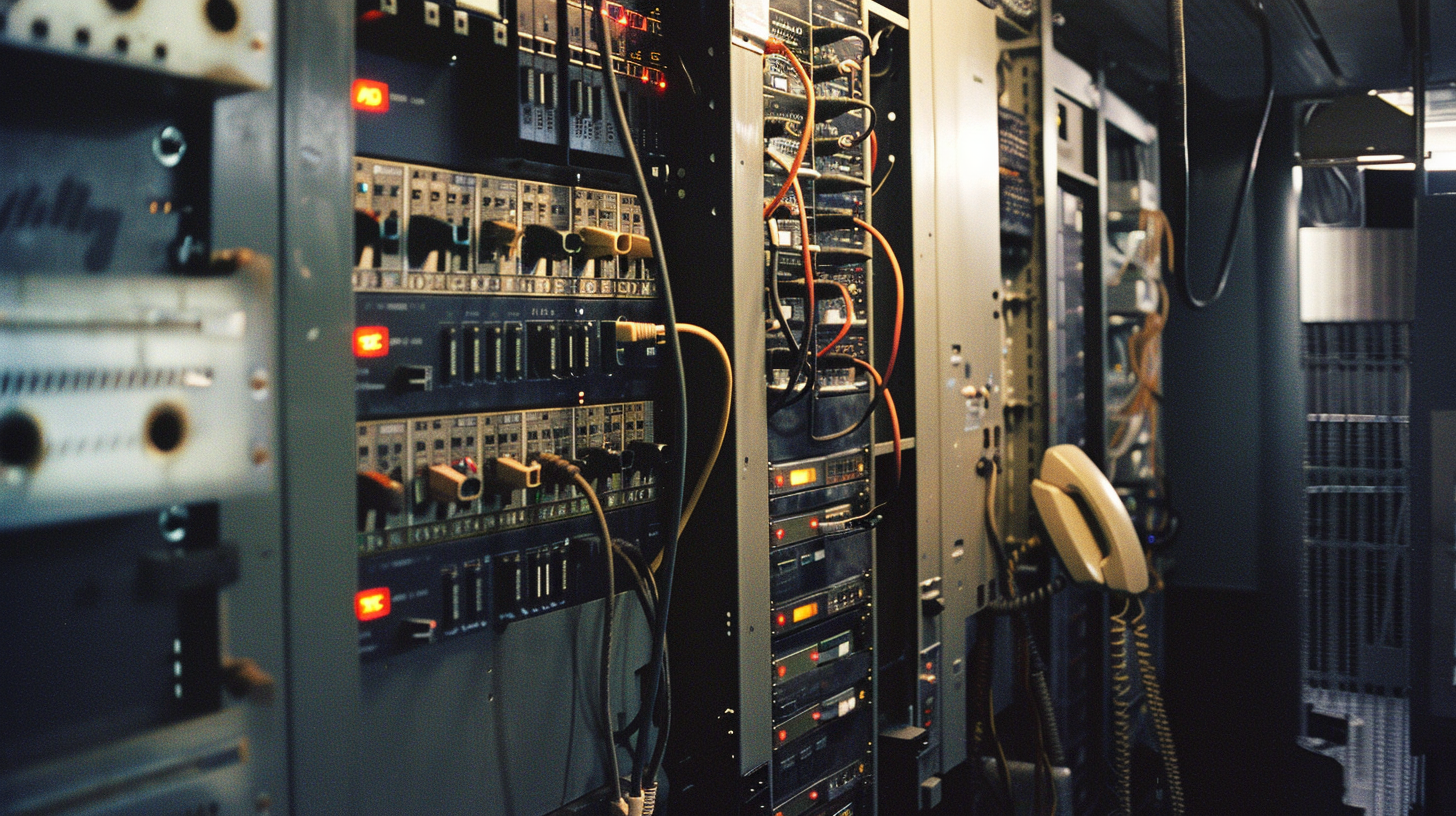

The architecture we were building lived at the intersection of software and physics. We were connecting cloud infrastructure to regional telephone servers — the bridge between the programmable internet and the physical telephone network. And that physical network had properties that software abstractions couldn’t hide.

Recording calls reliably and transferring them from local telephone servers to cloud sounds straightforward until you’re doing it at scale across a country with uneven connectivity, and you need to guarantee that no recording gets lost regardless of what the network does between the moment the call ends and the moment the file lands in storage. Routing calls through fallback servers when the primary internet link went down required building a real-time awareness of link health that most software observability tools weren’t designed for. The observability we needed was not “is the service up?” — it was “is this specific call, for this specific customer, routing correctly through this specific path, right now?”
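To make the idea of real-time link awareness concrete, here is a minimal sketch of health-based fallback routing. It is not a description of how Exotel built it. The class `LinkHealth`, the function `choose_route`, the window size, and the 90% threshold are all illustrative assumptions.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class LinkHealth:
    """Sliding window of recent probe results for one network path."""
    name: str
    window: int = 20
    results: deque = field(default_factory=deque)

    def record(self, ok: bool) -> None:
        # Keep only the most recent `window` probe outcomes.
        self.results.append(ok)
        if len(self.results) > self.window:
            self.results.popleft()

    def success_rate(self) -> float:
        # With no data yet, treat the link as unproven rather than healthy.
        if not self.results:
            return 0.0
        return sum(self.results) / len(self.results)


def choose_route(links: List[LinkHealth], min_success: float = 0.9) -> Optional[str]:
    """Return the first link, in priority order, that meets the threshold."""
    for link in links:
        if link.success_rate() >= min_success:
            return link.name
    return None  # nothing healthy: the caller should queue or fail explicitly


primary = LinkHealth("primary")
fallback = LinkHealth("fallback")
for _ in range(20):
    primary.record(False)   # simulated fibre cut on the primary path
    fallback.record(True)

selected = choose_route([primary, fallback])
print(selected)
```

A real system would probe per path and per customer, which is the harder part the paragraph above describes. The sketch only shows the selection step once those signals exist.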

We also built what every SaaS company builds — dashboards, reporting, multi-tenancy, the noisy neighbour problem. But those were table stakes. The problems that kept us up at night were physical. They had no Stack Overflow threads. You figured them out by reasoning from first principles about the nature of PRI lines and telephony routing, or you didn’t figure them out at all, and someone’s business stopped working.

PRI lines deserve a specific mention because they become important later. A PRI — Primary Rate Interface — is a physical telephone circuit. It carries a fixed number of simultaneous call channels. It is not software. You cannot autoscale it. You provision it from a telco, you pay for the capacity whether you use it or not, and when you run out of channels, calls fail. The only way to get more capacity is to order more physical circuits, which takes time and costs money regardless of actual utilisation.
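The arithmetic behind that constraint is simple, and a small sketch shows why it hurts. The channel counts below are the standard figures for ISDN PRI (30 voice channels on an E1 circuit, 23 on a T1) and are not stated in this article. The peak and average call volumes are hypothetical.

```python
import math

# Voice channels per circuit. Standard ISDN PRI figures, assumed here.
CHANNELS_PER_PRI = {"E1": 30, "T1": 23}


def circuits_needed(peak_concurrent_calls: int, circuit_type: str = "E1") -> int:
    """Whole circuits required to carry the peak without dropping calls."""
    return math.ceil(peak_concurrent_calls / CHANNELS_PER_PRI[circuit_type])


def utilisation(avg_concurrent_calls: float, circuits: int, circuit_type: str = "E1") -> float:
    """Fraction of paid-for channels in use at the average load."""
    return avg_concurrent_calls / (circuits * CHANNELS_PER_PRI[circuit_type])


# Hypothetical workload: campaigns peak at 500 concurrent calls, average is 120.
circuits = circuits_needed(500)
average_use = utilisation(120, circuits)
print(f"{circuits} E1 circuits, {average_use:.0%} used on average")
```

Capacity has to be bought for the peak, so a spiky workload pays for channels that sit idle most of the time.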

At the scale Exotel was operating, this was a constant tension. A customer could launch a campaign — a mass outbound calling event — that would suddenly demand far more concurrent channels than their provisioned capacity. The noisy neighbour problem in telephony wasn’t just about CPU or memory; it was about finite physical circuits that multiple customers shared. Rate limiting wasn’t a product decision. It was an infrastructure survival mechanism.
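One way to picture rate limiting as a survival mechanism is a shared pool of channels with a cap per customer. The sketch below is illustrative only. `ChannelPool`, the tenant names, and the numbers are assumptions, and a production version would need locking and fairness rules this one leaves out.

```python
from collections import Counter


class ChannelPool:
    """Shared pool of call channels with a per-tenant cap (not thread-safe)."""

    def __init__(self, total_channels: int, per_tenant_cap: int):
        self.total_channels = total_channels
        self.per_tenant_cap = per_tenant_cap
        self.in_use = Counter()

    def try_acquire(self, tenant: str) -> bool:
        if sum(self.in_use.values()) >= self.total_channels:
            return False  # the physical circuits are full
        if self.in_use[tenant] >= self.per_tenant_cap:
            return False  # this tenant has used its share
        self.in_use[tenant] += 1
        return True

    def release(self, tenant: str) -> None:
        if self.in_use[tenant] > 0:
            self.in_use[tenant] -= 1


# Hypothetical setup: one 30-channel circuit, no tenant may hold more than 20.
pool = ChannelPool(total_channels=30, per_tenant_cap=20)
campaign_granted = sum(pool.try_acquire("campaign") for _ in range(25))
support_granted = sum(pool.try_acquire("support") for _ in range(15))
print(campaign_granted, support_granted)
```

The campaign is held at its cap, which leaves channels for the second tenant. Without the cap, the campaign would have taken 25 of the 30 channels and left the support tenant only 5.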

A fair challenge to this framing is that most modern cloud telephony has moved away from PRI entirely. SIP trunking replaced it as the dominant protocol — more flexible, software-configurable, cheaper to provision. And that’s true. But SIP didn’t eliminate the physical constraint. It abstracted it one layer up. Somewhere beneath every SIP trunk, there is still a telco with physical infrastructure, capacity limits, and provisioning timelines that don’t bend to the demands of software. The abstraction is thinner than it looks. When you push it hard enough — when an AI agent campaign fires at a scale the telco wasn’t expecting — you find the ceiling again. It just has a different label on it.

Which brings up the dependency that almost nobody talks about when they pitch an AI-native telephony stack: the carrier relationship. Every voice call that touches the PSTN — which is most of them, because most of your customers are on phones, not WebRTC browser tabs — has to go through a telco at some point. That relationship is not a technical detail. It is a commercial and operational dependency that shapes what you can build, how fast you can scale, what your unit economics look like, and how much of your reliability is actually in your control. A new AI voice company can build an extraordinary intelligence layer and still find its growth capped by carrier capacity, routing agreements, or regulatory compliance obligations it didn’t know existed. The telco isn’t going away. The question for AI-native builders is whether they treat that dependency as a constraint to engineer around, or a layer to genuinely understand and work with.

Once the reliability layers were stable, we started moving up the stack. We rewrote latency-critical components in Go. We worked on noise reduction and call quality analysis. These were quality-of-service investments — making the pipe better, faster, cleaner. Good engineering work on a well-understood problem.

And alongside all of this, quietly, there were other conversations.

[Chart: approximate contribution of each stage to total round-trip time]

The Graveyard of Correct Ideas

This is the part of the telephony story I think about most when I look at what’s being built today.

There were ideas that surfaced regularly in the years I was at Exotel. Ideas that felt right, that had obvious value, that smart engineers and product people kept coming back to — and that kept getting shelved. Not because they were wrong. Because the world wasn’t ready for them.

Automated sentiment analysis on calls was one of them. The problem it was trying to solve was real and significant. If you are a collections company running hundreds of thousands of calls per month, your agents are making constant judgments about customer sentiment. This customer sounds hostile: escalate. This one sounds cooperative but cash-constrained: offer a payment plan. This one sounds like they’re going to hang up in thirty seconds: get to the point. These are skilled, experience-dependent judgments, and they exist entirely in the agent’s head. The moment the call ends, the judgment is gone. What goes into the system is a call disposition: “Promised to pay” or “Not reachable” or “Callback requested.” The nuance, which is often the most valuable part, disappears.

The idea of automating that signal was correct. Capture sentiment continuously during the call. Surface it in real time to the agent and their supervisor. Feed it into the post-call workflow to determine next steps. Use it to train agents on where their calls go wrong. The value was obvious to anyone who spent time with collections or sales teams.

We did the research. We hit the wall. The models that existed at the time couldn’t do this reliably at scale. Accuracy was insufficient. The infrastructure cost to process audio in real time for millions of calls was not justified by output quality that was still too variable to trust. So the idea went back in the drawer.

Voice biometrics was another. The ability to authenticate a caller by their voice pattern rather than by a PIN or a password. The use cases were real: reducing friction in customer authentication, detecting repeat fraud attempts, identifying bot-generated calls before they consumed agent time. Shiva Shankar Arumugam, who worked with me at Exotel and participated in some of these initiatives, recalled these explorations when I spoke to him as part of this research — the limitations were well understood even then: spoofing vulnerabilities, regulatory questions, the quality of the models available at the time. It was shelved.

And then there was BotMandate. The idea was to take a standard operating procedure — the document that every call centre has, the one that every new agent gets trained on in their first week — and have the system build a workflow from it automatically. Give it the guidelines, let it construct the execution logic. The business gives the intent. The system figures out the structure.

I want to be precise about what BotMandate was trying to do, because it is easy to understate it. It was not trying to take a structured flowchart and convert it to a decision tree. It was trying to take a prose document describing how a business operates — the kind of document written by a human for humans — and derive from it a functional workflow that could be executed by the system. That is, in essence, exactly what the most sophisticated AI-native workflow and voice agent platforms are building today, with large language models doing the interpretation that rule engines couldn’t.

The idea was correct. The capability layer didn’t exist.

Some of these ideas are now being executed. Not as proof of concept — as products with real customers. Sentiment analysis at scale, which we shelved because the models weren’t accurate enough, is now running in production at contact centre intelligence platforms. The accuracy is there. The infrastructure cost is justified. The signal that used to live entirely in the agent’s head is now being captured, structured, and fed back into workflows in real time. SOP-to-workflow — the core ambition of BotMandate — exists in early form inside several AI agent platforms today, where a business can describe its process in plain language and have the system construct the execution logic. It is not perfect. The edge cases still surface. But the gap between what the system produces and what a human would design has narrowed to the point where it is useful in production. Voice biometrics remains the most nascent of the three — the spoofing problem is harder than it looks, and the regulatory questions in markets like India have not been resolved. But the trajectory is clear. The graveyard is being emptied. The question is not whether these ideas will be built. It is whether the people building them understand deeply enough why the previous attempts failed.

This changes how you should think about who is best positioned to build AI-native products in infrastructure-heavy segments. The instinct is to assume it’s the AI-first builder — someone who deeply understands the models, the architectures, the emerging tooling, and applies that understanding to a new domain. And sometimes that’s right. But in segments like telephony, where the problems are deeply physical and the history of failed attempts is long and instructive, the more credible builder is probably someone who already spent years understanding why the previous attempts failed. Not because they’re smarter, but because they already know which walls are real and which ones just look real from the outside.

[Chart: qualitative index; higher is better for quality, lower is better for complexity]

The chart above tells the story that the market narrative misses. Voice quality and infrastructure complexity moved in opposite directions for years — but they did not move at the same speed. Quality improved gradually, then dramatically. Complexity reduced gradually, then stalled. The crossover at 2023 is not a finish line. It is the moment the intelligence layer finally caught up with the vision — and exposed how much the infrastructure layer still has to close.

What 2026 Actually Looks Like

The market has moved. The use cases that were theoretical when we were shelving sentiment analysis and BotMandate are now product categories with funded companies and real customers.

Sales outreach through AI agents. Collections automation that runs conversations without a human on one end. Customer support that handles the majority of inbound queries without escalation. The industries that built their operations on call volume — financial services, insurance, healthcare, e-commerce — are actively deploying AI in the telephony layer. The demand is real and it is accelerating.

Roughly 95% of what is being built right now is in the customer support segment. A customer reaches out. An AI agent handles it. No wait time. No transfer queue. Resolution, or escalation when needed. The business case is clear: companies using AI in customer service report 30–40% reductions in support costs and 60% faster resolution times. 43% of contact centres are already using AI to automate repetitive tasks. 46% have deployed real-time agent assist tools. The ROI is calculable, and the technology is good enough for a significant portion of interactions.

[Stat cards: using AI to automate tasks (43% of contact centres); fully autonomous AI agents in production, 2024 (under 1%); cost reduction with AI reported by adopters (30–40%); AI pilots failing or underperforming (95%; MIT & McKinsey, 2025)]

But the production reality is more sobering. Fully autonomous AI voice agents (live systems handling real customer calls, not demos or pilots) were running in fewer than 1% of contact centres as of 2024.

The perception gap runs deeper than deployment numbers alone. Twilio’s 2025 Inside the Conversational AI Revolution report — surveying 4,800 consumers and 457 business leaders across 15 countries — found that 90% of business leaders believe their customers are satisfied with their conversational AI experience. Only 59% of consumers agree. A 31-point gap between what companies think they’ve built and what customers actually experience. That is not a technology problem. That is an assumption problem — and it lives in exactly the same place as the infrastructure gap: builders optimising for what they can measure, not for what customers feel.

The gap between adoption of AI features and deployment of AI-native systems is vast. And the reasons come down to infrastructure walls that keep getting rediscovered.

[Chart: % of deployments citing each issue as top blocker]

The walls are familiar to anyone who spent time inside the infrastructure. They just don’t look familiar to teams that came to telephony through AI rather than the other way around.

The concurrency problem, revisited.

The rate limiting we built at Exotel was not a product decision. It was a load-bearing piece of infrastructure designed to protect a shared physical resource — PRI line capacity — from being overwhelmed by a single customer’s campaign. It was carefully designed. It worked. It was the right solution to the problem it was solving.

AI agents break this design in a specific and non-obvious way.

A human agent handles one call at a time. They dial. They talk. The call ends. They disposition it. They dial again. The concurrency is naturally limited by human biology and attention. The infrastructure was designed around this reality.

An AI agent has no such limit. It can run hundreds of concurrent conversations. Thousands, if the infrastructure supports it. Which means the assumptions baked into the telephony layer — the rate limits, the capacity planning, the cost models, the monitoring systems — were designed for a world that no longer applies. The question is not how to lift the rate limit. The question is how to redesign the entire capacity model for a fundamentally different concurrency profile.
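The shift in concurrency profile can be put in numbers with Little's law, a standard queueing result that I am bringing in here for illustration: the average number of calls in progress equals the rate at which calls start multiplied by their average duration. Every figure below is hypothetical.

```python
def concurrent_calls(calls_per_minute: float, avg_call_minutes: float) -> float:
    """Little's law: average calls in progress = start rate x average duration."""
    return calls_per_minute * avg_call_minutes


# Human floor: concurrency can never exceed the number of agents.
human_agents = 50                      # assumed headcount
human_ceiling = human_agents           # one live call per agent at a time

# AI campaign: concurrency is set by dial rate and call length, not headcount.
ai_dial_rate = 400                     # calls started per minute (assumed)
ai_avg_call_minutes = 2.5              # assumed
ai_concurrency = concurrent_calls(ai_dial_rate, ai_avg_call_minutes)

print(f"human ceiling: {human_ceiling}, AI campaign: {ai_concurrency:.0f}")
```

With humans, headcount is the limit and the telephony layer rarely has to enforce one. With AI agents, the only limit left is whatever the infrastructure imposes, so the capacity model has to be designed around dial rate and call duration instead.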

And then there is the PRI problem, which does not go away just because the software layer has become intelligent.

PRI lines are still physical circuits. They still cannot be autoscaled the way cloud compute can. An AI telephony company that can spin up thousands of concurrent agent conversations through elegant software architecture still needs the physical line capacity to route those calls through the telephone network. And the economics of that capacity — provisioned in advance, paid for whether used or not, ordered from telcos on timelines measured in weeks — have not changed with the arrival of large language models.

No company today has solved this end-to-end. The software layer has become dramatically more capable. The physical layer it depends on has not. Every AI-native telephony company is, somewhere in its architecture, managing this tension. Most of them discovered it after they had already built everything else.

The quality and control problem.

The new companies building on top of major model providers are, in many cases, building beautifully. The intelligence layer is genuinely impressive. Conversations are coherent, contextually aware, able to handle complexity that would have been impossible a few years ago.

What these companies often lack is granular control over the voice layer underneath the intelligence. Noise cancellation that can be tuned or disabled for specific use cases — because sometimes the noise reduction algorithm that works well for a quiet office call actively degrades audio quality for a call centre floor where the ambient noise contains useful signals. Latency controls that allow real-time feedback on audio quality and immediate adjustment. The ability to tune the nuances of voice processing for specific contexts without having to rebuild the entire stack.

These controls exist in mature telephony infrastructure because they were built by teams who spent years learning why they were necessary. They are not obvious to teams who arrived at telephony through AI. They are also not trivial to retrofit once you have a production system. The path toward solving the latency problem specifically runs through smaller, specialised models deployed at the edge — closer to the call, with lower inference overhead — rather than routing every conversation through a general-purpose LLM in a remote data centre. That direction is visible. It is not yet the default.

The missing mental models.

This is perhaps the subtlest gap, and the hardest to fix.

The people building AI voice products today are, in many cases, brilliant engineers and product thinkers. They understand machine learning, distributed systems, product design, and go-to-market. What they often don’t have is the accumulated intuition about telephony infrastructure that comes from years of operating inside it — understanding why certain architectural decisions were made, what failure modes they were protecting against, which constraints are genuinely physical and which are just accumulated habits masquerading as necessity.

This creates a specific pattern of mistakes. Not catastrophic mistakes — these are smart people building real products. But a recurring tendency to treat the telephony layer as a solved problem that can be abstracted away, when in reality it is a partially solved problem with hard constraints that have not changed. The abstraction breaks at the moments that matter most: high-scale campaigns, unexpected concurrency spikes, regional network failures, audio quality edge cases.

The most concrete illustration of this is the production stack that emerged as the de facto standard in 2025. Most AI voice deployments combined Twilio for telephony, ElevenLabs for text-to-speech, and either Vapi or Retell for orchestration — three separate companies, each solving one layer, stitched together at the seams. Each component is excellent at what it does. The integration points between them are where things fail. That is not an AI-native architecture. It is three pipes taped together. An AI-native telephony company does not assemble this stack. It redesigns the assumptions that made the stack necessary. This is precisely the problem Rapida AI is building toward — an open-source, end-to-end voice AI orchestration platform that brings LLMs, STT, TTS, telephony, noise cancellation, and observability into a single unified stack, so builders stop stitching and start building.

The India layer.

Building AI-native telephony in India is not the same problem as building it in the United States, and the difference is not just scale. India has twenty-two officially recognised languages and hundreds of dialects. An AI voice agent trained primarily on English, or even Hindi, will degrade sharply when it encounters a caller from Tamil Nadu speaking in a regional accent, or a farmer in Maharashtra switching mid-sentence between Marathi and Hindi as people naturally do. Speech recognition accuracy can drop fifteen to thirty percentage points when moving from standard to regional variants. For a collections call or a healthcare reminder, that degradation is not a UX inconvenience. It is a product failure. The multilingual problem in India is an order of magnitude harder than the accent diversity problem in the US, and most AI voice platforms that enter the Indian market underestimate it until they are already in production.

Then there is the regulatory layer. TRAI — the Telecom Regulatory Authority of India — governs what can be sent, to whom, at what time, and with what consent. The DND registry means a significant portion of your target calling list is legally unreachable for commercial calls. The consent framework for AI-initiated voice interactions is still evolving, and what is permissible today may not be permissible after the next regulatory update. These are not bureaucratic inconveniences. They are structural constraints that shape the product architecture from the ground up — what data you store, how you verify consent, what disclosures the AI agent has to make at the start of a call, and what your fallback looks like when a call is flagged as non-compliant. A company that builds its AI telephony stack without encoding these constraints at the infrastructure level will retrofit them later at significant cost. The India CPaaS numbers look compelling from the outside. The operating reality is more demanding than the market size suggests.

The full strategic implications of this layer — what it means for incumbents, for new entrants, and for the market opportunity — deserve more than a subsection. They are addressed in full below.

The India Opportunity Nobody Is Moving Fast Enough On

There is a market opportunity sitting in plain sight in India that most of the companies best positioned to capture it are not moving on fast enough. And most of the companies moving fast on it don’t have what it takes to actually capture it.

The global voice AI narrative is written in English. The benchmarks are in English. The model evaluations are in English. The case studies — the restaurant booking agents, the healthcare scheduling assistants, the outbound sales callers — are almost all in English or a small set of Western European languages with relatively similar phonetic structures and minimal code-switching.

India is not that market. And the difference is not a localisation problem. It is a fundamentally different technical and regulatory challenge that requires a ground-up approach, not a translation layer on top of a system designed for something else.

The language problem is harder than it looks from the outside

India has twenty-two officially recognised languages. It has hundreds of dialects. And it has a conversational behaviour that is entirely normal for its speakers and almost entirely absent from the training data of every major voice AI model: code-switching.

A collections agent calling a customer in Mumbai does not have a conversation in Hindi or in English. They have a conversation that moves between Hindi, English, and Marathi — sometimes within a single sentence. The customer who starts in formal Hindi switches to English when discussing financial terms, then drops into Marathi when expressing frustration. This is not an edge case. This is how people talk.

Current ASR models handle this poorly. The transcript degrades. The LLM receives a malformed input. The agent response becomes contextually inappropriate. The call fails — not with an error, but with a customer who feels unheard and an outcome that isn’t captured correctly.

The accuracy drop when moving from standard Indian English to regional language variants is not a rounding error. Studies have documented a degradation of fifteen to thirty percentage points. For a collections call or a healthcare reminder, this is not a UX inconvenience. A collections agent that mishears “I’ll pay next week” as “I won’t pay this week” and triggers an escalation workflow has not just failed technically. It has damaged a customer relationship and potentially created a compliance issue.
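One common way to measure transcription accuracy is word error rate: the word-level edit distance between what was said and what was transcribed, divided by the number of words actually spoken. The sketch below applies it to the mishearing described above. It is a plain textbook implementation, not the scoring used by any particular ASR vendor.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the ref words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


wer = word_error_rate("I'll pay next week", "I won't pay this week")
print(f"WER: {wer:.2f}")
```

Three word-level errors against a four-word utterance are enough to invert the customer's meaning. An aggregate accuracy figure hides this, because it does not show which words were lost.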

The multilingual problem in India is an order of magnitude harder than the accent diversity problem in the US. And solving it requires something that cannot be purchased off the shelf from OpenAI or Anthropic today: high-quality training data in Indian regional languages, acoustic models tuned for Indian speech patterns, and code-switching detection that understands the specific language pairs that appear in Indian conversations — not the generic multilingual capability that works reasonably well for Spanish-English switching in California.

The regulatory layer is structural, not bureaucratic

TRAI — the Telecom Regulatory Authority of India — is not a compliance checkbox. It is a structural constraint that shapes what an AI voice product can do, who it can call, when it can call them, and what it must say when it does.

The DND registry is the most immediate constraint. A significant portion of any consumer calling list in India is legally unreachable for commercial outbound calls. An AI voice platform that doesn’t encode DND compliance at the infrastructure level — not as a pre-call filter, but as an architectural guarantee — is not a platform that an Indian enterprise can responsibly deploy at scale.
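The difference between a filter and a guarantee can be sketched in code: the dialling function accepts only a permit issued by the compliance check, never a raw phone number. Everything here is hypothetical, including the names `ComplianceGate` and `DialPermit`, the sample numbers, the calling window, and the rule that recorded consent overrides a DND entry. The real rules have to come from the regulation itself.

```python
from dataclasses import dataclass
from datetime import datetime, time


class ComplianceError(Exception):
    """Raised when a call must not be placed."""


@dataclass(frozen=True)
class DialPermit:
    """Proof that a number passed every check; the dialer accepts nothing else."""
    number: str
    checked_at: datetime


class ComplianceGate:
    def __init__(self, dnd_numbers: set, consented_numbers: set,
                 window_start: time, window_end: time):
        self.dnd_numbers = dnd_numbers
        self.consented_numbers = consented_numbers
        self.window_start = window_start
        self.window_end = window_end

    def permit(self, number: str, now: datetime) -> DialPermit:
        if not (self.window_start <= now.time() < self.window_end):
            raise ComplianceError("outside permitted calling window")
        # Assumed rule for illustration: consent on record overrides a DND entry.
        if number in self.dnd_numbers and number not in self.consented_numbers:
            raise ComplianceError("number is on the DND list without recorded consent")
        return DialPermit(number=number, checked_at=now)


def dial(permit: DialPermit) -> str:
    """Stand-in for the carrier-facing call; takes a permit, never a raw number."""
    if not isinstance(permit, DialPermit):
        raise TypeError("dial() requires a DialPermit")
    return f"dialling {permit.number}"


# Placeholder data: one number on the DND list, a 09:00 to 21:00 window.
gate = ComplianceGate({"+910000000001"}, set(), time(9, 0), time(21, 0))
mid_morning = datetime(2026, 1, 15, 11, 0)
result = dial(gate.permit("+910000000002", mid_morning))
print(result)
```

In Python this is a convention rather than a hard guarantee, since nothing stops code from constructing a `DialPermit` directly. The point is the shape: the compliance check sits in the only path to the carrier, so it cannot be skipped by forgetting to call a filter.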

The consent framework for AI-initiated voice interactions is actively evolving. What is permissible today under TRAI guidelines may not be permissible after the next regulatory update. An AI voice platform built without the flexibility to adapt its disclosure language, consent capture mechanism, and call recording handling to evolving TRAI requirements will face costly retrofitting — or worse, enterprise customers who pull deployments when a compliance gap is identified.

Then there is the specific requirement that an AI agent disclose itself as an AI at the start of a call. This is not unique to India — it is emerging as a regulatory norm globally. But the implementation in India has specific nuances: the disclosure must be in the language of the call, it must be audibly clear, and the customer must have a clear path to a human agent. Building this into a voice AI platform as an afterthought produces brittle, inconsistent implementations. Building it in from the ground up produces a compliance posture that is genuinely defensible.
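Building disclosure in from the ground up can be as simple as refusing to start a call in any language that has no approved disclosure text. This is a sketch under that assumption. The dictionary `DISCLOSURES`, the function name, and the English wording are placeholders, not approved or legally reviewed language.

```python
# Placeholder wording; real text would come from legal review, one entry per language.
DISCLOSURES = {
    "en": "This call is from an AI assistant. Say 'agent' at any time to reach a person.",
}


def opening_disclosure(call_language: str) -> str:
    """Return the approved disclosure, or fail before the call starts."""
    try:
        return DISCLOSURES[call_language]
    except KeyError:
        raise LookupError(
            f"no approved AI disclosure for language {call_language!r}"
        ) from None


greeting = opening_disclosure("en")
print(greeting)
```

Failing closed matters here: falling back to English for a caller who speaks Marathi would technically play a disclosure, but it would not meet the requirement that the disclosure be in the language of the call.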

The companies that understand these constraints at the architectural level — not as features to be added but as assumptions to be designed around — have a structural advantage over every global platform entering the Indian market. Twilio knows compliance. ElevenLabs is learning it. No global player has the accumulated institutional knowledge of how the Indian regulatory environment actually works in practice — the edge cases, the carrier-level enforcement patterns, the enterprise risk appetite — that an Indian incumbent has built over years of operating in this environment.

The infrastructure variation problem compounds everything

India is not one market. It is many markets with very different infrastructure profiles stacked on top of each other.

An AI voice call placed to a customer in South Mumbai, where 4G and 5G coverage is reliable and latency is low, operates in a fundamentally different environment from a call placed to a customer in a tier-3 town in Uttar Pradesh, where connectivity is variable, 2G fallback is common, and audio quality degrades in ways that stress every layer of the voice AI stack.

A collections company operating at scale in India is calling across all of these environments simultaneously. An AI voice platform that performs excellently in controlled conditions and degrades unpredictably in variable connectivity conditions is not a platform that works for India at scale. It is a platform that works for the demo and fails in the field.

This is not a new problem. It is the same problem that Exotel and its generation of cloud telephony companies spent years solving — building fallback routing, variable bitrate audio, real-time quality monitoring, and graceful degradation for exactly this infrastructure variation. The solution exists in the accumulated operational knowledge of companies that built telephony for India from the ground up.
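
To make "graceful degradation" concrete, here is a minimal sketch of a policy that changes how the agent behaves as the link gets worse. Everything in it is an assumption for illustration: the mode names, the thresholds, and the `LinkQuality` fields are invented, and a production system would tune these against real call data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkQuality:
    packet_loss_pct: float  # percentage of packets lost over the sampling window
    jitter_ms: float        # inter-arrival jitter in milliseconds


def choose_mode(q: LinkQuality) -> str:
    """Pick a call-handling mode. Thresholds are illustrative, not tuned values."""
    if q.packet_loss_pct < 1.0 and q.jitter_ms < 30.0:
        return "full_conversation"    # normal conversational agent
    if q.packet_loss_pct < 5.0 and q.jitter_ms < 80.0:
        return "short_prompts"        # shorter utterances, explicit confirmations
    if q.packet_loss_pct < 15.0:
        return "dtmf_fallback"        # keypad input instead of speech recognition
    return "reschedule_callback"      # end politely and retry later
```

The point of the sketch is that the fallback ladder is decided per call, from measured conditions, which is the opposite of a platform that assumes demo-quality audio everywhere.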

The new AI voice platforms don’t have this. They will acquire it — either by building it slowly through production failures, or by partnering with or acquiring someone who already has it.

The market that unlocks when this is solved

The India CPaaS market, at $1.1 billion in 2025 and growing at roughly 25% annually, is a reasonable number for current spend. It understates the opportunity in a specific way: it captures what enterprises are currently paying for voice infrastructure. It does not capture the latent spend that is unlocked when multilingual AI voice actually works at production quality.

Consider collections alone. India’s collections industry runs almost entirely on voice. The volume of outbound calls made by BFSI companies, NBFCs, and collections agencies in India annually is staggering. Most of it is done by human agents working from a script, at high cost, with inconsistent quality and significant compliance risk. The willingness to pay for AI automation that actually works (that handles regional languages, respects the DND registry, and produces verifiable outcomes) is not speculative. It is visible in every enterprise conversation happening in this space right now.

Add healthcare — appointment reminders, medication adherence calls, post-discharge follow-ups — all running at scale in regional languages across tier-2 and tier-3 markets. Add logistics, where driver coordination and delivery confirmation calls happen in hundreds of local languages. Add insurance, where policy servicing and renewal calls are a known cost centre that every insurer wants to automate.

The market that unlocks when the multilingual voice AI problem is genuinely solved in India is not the $1.1 billion CPaaS market. It is the total spend on human voice interaction across these industries — which is an order of magnitude larger.

The window and who closes it

This opportunity has a window. It is not indefinite.

Global platforms are investing in multilingual capability. The pace of model improvement means that the gap between what a global platform can do and what the Indian market needs is closing — slowly in 2025, faster in 2026 and 2027 as more Indian language data enters training pipelines and as global platforms make strategic acquisitions in the Indian market.

The companies best positioned to capture this window are Indian incumbents with existing carrier relationships, enterprise customer relationships in the relevant verticals, and the institutional knowledge of the regulatory environment. They have a 24 to 36 month advantage that is real but not permanent.

The risk is not that the opportunity disappears. The risk is that it gets captured by someone else — a well-funded Indian AI startup that acquires the carrier relationships it needs, or a global platform that acquires an Indian voice AI company to fast-track market entry.

The question for every Indian CPaaS CxO is not whether the multilingual AI voice opportunity in India is real. It is. The question is whether they move on it as a strategic bet — investing in multilingual model development, compliance architecture, and vertical-specific AI capabilities — or whether they wait to see how the market develops and find themselves retrofitting AI-native capabilities onto infrastructure that was never designed for them. The window is open. It does not stay open forever.

The Pricing Model Disruption Nobody Wants to Talk About

There is a conversation happening in every enterprise sales meeting in the voice AI space right now, and almost nobody in the CPaaS industry is having it publicly.

The conversation goes like this. A CxO at a collections company is being pitched by a new AI voice platform. The pitch is not about minutes. It is not about concurrent calls or call quality or IVR depth. The pitch is about recoveries. How many debts did the AI agent collect? What was the recovery rate? What was the cost per rupee recovered?

The enterprise buyer is thinking in outcomes. The CPaaS vendor is still selling infrastructure.

That gap — between how the new entrants are pricing their product and how the incumbents are pricing theirs — is not just a commercial problem. It is a signal that the two sides are operating with fundamentally different assumptions about what the product actually is.

How per-minute pricing was born and why it made sense

Per-minute pricing is not an arbitrary convention. It emerged directly from the underlying economics of telephony.

Telcos charge per minute because the physical resource being consumed — circuit capacity, switching infrastructure, network time — is time-bound. A two-minute call consumes twice the network resource of a one-minute call. The cost structure is linear with time. The pricing follows the cost structure.

CPaaS companies inherited this model and built on top of it. You provision a Twilio number, you get charged per minute for calls made and received, you pass that cost through to your customers with a margin. The value you are delivering is connectivity — the ability to make and receive calls programmatically. Connectivity is a time-bound resource. Per-minute pricing makes sense.

The model worked well for a decade because the value delivered by a call was roughly proportional to its length. A longer call meant more conversation. More conversation meant more opportunity to achieve the call’s purpose. The pricing proxy — time — was a reasonable approximation of value delivered.

What AI breaks in this model

An AI voice agent does not have a linear relationship between call length and value delivered.

A well-designed AI collections agent can determine within ninety seconds whether a customer is going to pay, negotiate a payment plan, confirm the details, and close the call. A poorly designed one can stay on the line for eight minutes achieving nothing. The per-minute model charges four to five times as much for the bad outcome as for the good one, depending on how the ninety seconds is rounded for billing.
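
The exact multiple depends on the billing pulse. A short worked sketch, assuming a hypothetical rate of 1.0 currency unit per minute and a hypothetical `charge` helper: with whole-minute pulses the ninety-second call bills as two minutes, and with per-second pulses it bills as 1.5 minutes.

```python
import math


def charge(duration_seconds: int, rate_per_minute: float, pulse_seconds: int = 60) -> float:
    """Charge for a call billed in pulses of `pulse_seconds`, rounded up to whole pulses."""
    pulses = math.ceil(duration_seconds / pulse_seconds)
    return pulses * pulse_seconds / 60 * rate_per_minute


RATE = 1.0  # hypothetical rate, arbitrary currency units per minute

good_call = 90       # seconds: the call that resolves the account
bad_call = 8 * 60    # seconds: the call that achieves nothing

# Whole-minute billing: 8.0 / 2.0 = 4.0
ratio_minute_pulse = charge(bad_call, RATE) / charge(good_call, RATE)

# Per-second billing: 8.0 / 1.5, roughly 5.33
ratio_second_pulse = charge(bad_call, RATE, pulse_seconds=1) / charge(good_call, RATE, pulse_seconds=1)
```

Either way, the vendor earns several times more from the call that failed, which is the misalignment the per-minute model builds in.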

More fundamentally: the value delivered by an AI voice call is not connection time. It is the outcome achieved. A three-minute call that books an appointment has a specific, measurable value to a healthcare provider. A three-minute call that doesn’t book an appointment has a near-zero value — or negative value if it consumed a slot in a calling queue that a successful call could have used.

The enterprise buyer understands this intuitively. They are not buying minutes. They are buying appointments, recoveries, resolved tickets, qualified leads. They would like to pay for those outcomes. The per-minute model forces them to pay for the infrastructure whether the outcome materialises or not.

This is not a new complaint about CPaaS pricing. Enterprise customers have always known they were paying for infrastructure rather than outcomes. They tolerated it because there was no alternative — the outcome was delivered by a human agent whose cost was also not outcome-linked, and the CPaaS was just one cost line among many.

AI changes the tolerance level. When the agent is AI and the outcome is measurable and the attribution is clear — this call produced this result — the case for outcome-based pricing becomes much stronger, and the case for per-minute pricing becomes much weaker.

The new pricing models emerging at the edges

AI voice companies are experimenting with several alternatives to per-minute pricing, with varying degrees of commercial maturity.

Outcome-based pricing is the most ambitious. Charge per appointment booked, per debt collected above a threshold, per support ticket resolved without human escalation. The enterprise buyer pays only when value is delivered. The vendor takes on the risk of underperformance. Early deployments in the collections space in the US market have shown strong enterprise adoption — buyers are willing to pay a significant premium per outcome versus what they were paying per minute, because the risk transfer has real value.

The commercial challenge is that outcome-based pricing requires deep integration into the customer’s downstream systems to verify the outcome. Did the appointment actually get kept? Did the payment actually come through? Did the support ticket actually stay resolved? Measuring outcomes requires data access that per-minute vendors have never needed and that enterprise customers are not always comfortable providing.

Per-conversation pricing is a middle ground — charge a fixed fee per completed AI conversation regardless of length. This eliminates the perverse incentive of the per-minute model while avoiding the complexity of outcome measurement. It is simpler to operationalise and easier to forecast. It does not fully align incentives but it is a significant improvement over per-minute.

Hybrid models are emerging that combine a lower per-minute infrastructure charge with a per-outcome success fee. This lets the vendor recover infrastructure costs while participating in the upside of successful outcomes. It is a transitional model — more honest than pure per-minute, less aligned than pure outcome-based.
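
The four models can be compared side by side on the same batch of calls. This is an illustrative sketch only: the `Call` fields, the function names, and every rate and fee in the usage note are invented numbers, not market prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Call:
    minutes: float
    completed: bool  # the conversation reached a natural end
    outcome: bool    # the business outcome was achieved, e.g. an appointment booked


def per_minute(calls: list[Call], rate: float) -> float:
    return sum(c.minutes for c in calls) * rate


def per_conversation(calls: list[Call], fee: float) -> float:
    return sum(1 for c in calls if c.completed) * fee


def per_outcome(calls: list[Call], fee: float) -> float:
    return sum(1 for c in calls if c.outcome) * fee


def hybrid(calls: list[Call], rate: float, success_fee: float) -> float:
    # Lower infrastructure rate plus a fee for each successful outcome.
    return per_minute(calls, rate) + per_outcome(calls, success_fee)


calls = [
    Call(minutes=1.5, completed=True, outcome=True),
    Call(minutes=8.0, completed=True, outcome=False),
    Call(minutes=0.5, completed=False, outcome=False),
]
```

With these made-up inputs, `per_minute(calls, 2.0)` bills 20.0, `per_conversation(calls, 5.0)` bills 10.0, `per_outcome(calls, 30.0)` bills 30.0, and `hybrid(calls, 0.5, 20.0)` bills 25.0. Only the per-minute figure grows when the eight-minute call that achieved nothing gets longer.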

The threat to incumbents

The per-minute model is not going to collapse overnight. Enterprise contracts have inertia. Procurement processes are slow. The switching cost of moving a large voice operation from one infrastructure provider to another is real.

But the threat is structural and it compounds over time.

Every enterprise customer who moves to an AI voice platform with outcome-based pricing is a customer who is no longer thinking about their telephony spend in per-minute terms. Once that mental model shifts — once a CxO is measuring their voice AI spend in cost per recovery or cost per appointment rather than cost per minute — going back to per-minute pricing feels like a regression.

The new entrants understand this. They are not competing with Exotel on per-minute rates. They are competing on a different dimension entirely — one where Exotel’s per-minute pricing is not a competitor to be undercut but a legacy model to be made obsolete.

The incumbents who see this most clearly are the ones who are starting to reframe their own commercial conversations. Not “we are cheaper per minute than Twilio” but “we can deliver outcomes that the pure-play AI companies can’t because we have the carrier relationships, the compliance posture, and the India-specific language capabilities that make those outcomes achievable at scale.”

That is a different sales conversation. It requires a different pricing model to make it credible.

What the transition actually requires

Moving from per-minute to outcome-based pricing is not a pricing decision. It is a product, architecture, and commercial transformation.

It requires instrumentation — the ability to track what happens after a call ends, to verify that an appointment was kept or a payment was made, to attribute the outcome to the AI conversation with sufficient confidence to invoice on it.
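
As a rough illustration of what that attribution step involves, the sketch below credits a payment to the most recent call to the same customer that ended within a fixed window before the payment. The last-touch rule, the seven-day window, and the names `CallRecord`, `Payment`, and `attribute` are all assumptions made for the example. Real contracts would negotiate these rules explicitly.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class CallRecord:
    call_id: str
    customer_id: str
    ended_at: datetime


@dataclass(frozen=True)
class Payment:
    customer_id: str
    paid_at: datetime


def attribute(
    payment: Payment,
    calls: list[CallRecord],
    window: timedelta = timedelta(days=7),
) -> Optional[str]:
    """Return the call_id of the latest call that ended before the payment
    and within `window` of it, or None if no call qualifies."""
    candidates = [
        c for c in calls
        if c.customer_id == payment.customer_id
        and timedelta(0) <= payment.paid_at - c.ended_at <= window
    ]
    if not candidates:
        return None
    return max(candidates, key=lambda c: c.ended_at).call_id
```

Even this toy version needs payment data from the customer's own systems, which is exactly the access a per-minute vendor has never had to ask for.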

It requires vertical depth — outcome pricing only works when you understand the outcome deeply enough to measure it. A generic voice platform cannot price on collections outcomes because it does not understand what a successful collections conversation looks like. A platform with deep BFSI experience and collections-specific models can.

It requires risk tolerance — outcome-based pricing means the vendor carries performance risk. If the AI agent underperforms, the revenue doesn’t materialise. This requires confidence in the product and financial resilience to absorb variability.

It requires a different sales motion — outcome-based pricing sells to a different buyer. Not the IT procurement team buying infrastructure, but the business unit leader buying results. The CxO of a collections company, not the VP of Telecom.

None of this is easy. The incumbents who attempt this transition will face internal resistance, commercial risk, and the operational complexity of building outcome measurement into platforms that were never designed for it.

The incumbents who don’t attempt it will find themselves selling infrastructure in a market that is increasingly buying outcomes. That is a survivable position in the short term and an untenable one in the medium term.

The window to lead this transition — rather than be forced into it — is the same window as the India multilingual opportunity. It is open now. It will not stay open indefinitely.

Where We Actually Are

The AI-native telephony opportunity is real. The technology has crossed a threshold that makes the use cases from the BotMandate era finally executable. Sentiment analysis that we shelved because the models weren’t good enough is now running in production. Voice agents that can handle complex, multi-turn conversations without a script are being deployed at scale. The things we imagined and couldn’t build are being built.

But we are earlier than the current noise suggests.

The intelligence layer is ahead of the infrastructure layer. The new builders understand the models better than they understand the physical constraints the models sit on top of. The companies that will be genuinely AI-native in this segment — not AI-augmented, not AI-featured, but architecturally rebuilt around what AI actually enables — are the ones that take both layers seriously. The ones that don’t treat the telephony infrastructure as a commodity to be abstracted away, but as a set of real constraints that the AI-native product has to be designed around.

The PRI concurrency problem is not going away. The latency requirements for real-time voice AI are not loosening. The regional infrastructure variation that made building telephony hard in the Exotel era is not resolved. These are the physics of the problem. The models are extraordinary. The physics are what they are.

The market needs four things to close this gap. A platform that enables AI companies to handle telephony scaling without having to rediscover the physics themselves. Accessible frameworks and mental models that make the accumulated knowledge of telephony infrastructure legible to builders who didn’t grow up in it. Granular controls on voice quality with sensible defaults for teams that don’t have the expertise to tune from scratch. And a genuine reckoning with the India-specific layer — language diversity, regulatory constraints, and infrastructure variation — that most platforms discover only after they are already in production.

These are not AI problems. They are infrastructure problems that AI has made urgent.

What changes with AI is not the constraints. What changes is what you can build within them. The intelligence that was missing from the pipe is now available. Andreessen Horowitz put it plainly in their January 2025 voice AI update: we are just now transitioning from the infrastructure to the application layer of AI voice. As models improve, voice becomes the wedge, not the product. The infrastructure chapter is closing. The application chapter hasn’t been written yet. That is both the honest state of the market and the clearest signal of where the real work lies. The question is whether the pipe is ready for that intelligence.

In most cases, it isn’t yet.

That is not a pessimistic conclusion. It is an honest one, and honesty is more useful than optimism when you are deciding where to build. The gap between where telephony infrastructure is today and where it needs to be for AI-native products to fully deliver is a real gap. It is also a specific, well-defined gap. The people who understand it, who have spent time inside the infrastructure and can see exactly what needs to change, are the ones best positioned to close it.

If I Were Building This From Scratch

If I were building a telephony company today with no legacy constraints — no existing customers, no inherited architecture, no accumulated habit — the first thing I would do is separate the things worth keeping from the things that only feel necessary because they have always been there.

What I would keep.

A deep respect for the physical layer. Not as a constraint to route around, but as a reality to design with. The PRI intuition, the understanding that somewhere beneath every abstraction there is a telco, a circuit, and a limit, stays relevant even after SIP trunks replaced PRI lines as the dominant way to connect to the carrier. The ceiling moved. It did not disappear.

The obsession with observability at the call level, not the service level. Not “is the system up?” but “is this specific call, for this specific customer, producing the outcome it was supposed to produce, right now?” That granularity is hard to build and easy to skip. It is also the thing that separates platforms that work in demos from platforms that work in production.

The institutional knowledge of what breaks at scale. Every failure mode that Exotel or its generation hit — the noisy neighbour problem, the campaign overload, the regional network outage, the carrier dispute — is a wall that every new entrant will eventually hit too. The companies that have already hit these walls and rebuilt around them have a knowledge asset that cannot be purchased and can barely be documented. It lives in the people who were there.

What I would throw out.

The assumption that a call is a discrete event — a thing that starts, happens, and ends, and whose value is measured in minutes.

This is the assumption that shaped every data model, every API, every reporting layer, every pricing structure in the legacy telephony world. It was a reasonable assumption when the call was the product. It is the wrong assumption when the outcome is the product.

An AI-native telephony system does not manage calls. It manages context. The call is one moment in a continuous relationship between a business and a customer — a moment that the system understands, learns from, and acts on, before and after the audio starts and stops. The system knows what happened on the last three calls. It knows what was promised. It knows what the customer’s tone suggested even when their words said something different.

The call is not the product. What the call produces — the understanding, the commitment, the signal — is the product. That distinction changes everything downstream. The data model changes. The integration points change. The pricing model changes. The metric you optimise for changes. Everything that was built to manage calls as discrete events has to be rethought for a system that manages context as a continuous stream.
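
To show how the data model shifts, here is a minimal sketch in which the top-level record is the customer's context and a call is just one entry appended to it. The class and field names (`CustomerContext`, `Interaction`, `Commitment`) are hypothetical, and how a sentiment label would actually be inferred is out of scope.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Commitment:
    description: str
    due: date
    fulfilled: bool = False


@dataclass
class Interaction:
    call_id: str
    summary: str
    sentiment: Optional[str] = None  # e.g. "hesitant"; inference is not shown here


@dataclass
class CustomerContext:
    customer_id: str
    interactions: list[Interaction] = field(default_factory=list)
    commitments: list[Commitment] = field(default_factory=list)

    def record_call(self, interaction: Interaction, commitment: Optional[Commitment] = None) -> None:
        # A call adds to the running context instead of standing alone as a record.
        self.interactions.append(interaction)
        if commitment is not None:
            self.commitments.append(commitment)

    def recent(self, n: int = 3) -> list[Interaction]:
        # What happened on the last n calls.
        return self.interactions[-n:]

    def overdue(self, today: date) -> list[Commitment]:
        # What was promised and has not been kept.
        return [c for c in self.commitments if not c.fulfilled and c.due < today]
```

In a legacy call-detail-record schema, the call row is the unit and everything hangs off it. Here the call is an event inside a longer-lived object, which is the inversion the paragraph above describes.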

Most of what exists today is built on the old assumption. That is the gap. That is also the opportunity.

The Question This Piece Hasn’t Answered

There is a question that runs underneath everything in this post that I have not addressed directly. Not because I forgot it. Because the honest answer is incomplete and I am not comfortable pretending otherwise.

When AI handles the call — when the collections agent, the outbound sales rep, the customer support person is replaced by a system that does their job better, faster, and at a fraction of the cost — what happens to them?

The optimistic version is familiar. Humans move up the stack. The agent becomes a supervisor. The supervisor becomes a trainer. The trainer becomes a designer of the systems that replaced them. Each displacement creates a higher-order role. The workforce adapts. New jobs emerge that we cannot yet name.

That transition is real. I have seen it in organisations that have deployed AI in their voice operations thoughtfully, with investment in retraining and a genuine commitment to the people affected. It is not a fiction.

But it is not universal. And the timeline is shorter than most policy discussions acknowledge.

The more honest version is that a significant number of people whose livelihoods depend on call volume — the collections agent in Pune, the outbound sales rep in Hyderabad, the customer support person in Chennai — are going to find that livelihood compressed. Not eliminated overnight. Compressed gradually, then suddenly, in the way that these things always happen. The optimistic narrative about moving up the stack assumes that every displaced worker has the access, the support, and the economic runway to make that transition. Many do not.

I raise this not to resolve it. I can’t. No single post can, and anyone who tells you they have a clean answer to technological displacement at scale is selling something.

I raise it because a piece about AI-native telephony that describes the infrastructure problems, the market opportunity, the pricing disruption, and the competitive dynamics — without naming what happens to the people who currently do this work — is a piece that is only telling half the story.

The half that is easier to tell. The half that the people writing about AI tend to tell.

The vision has existed for years. The engine is finally here. The infrastructure is catching up.

We are earlier than everyone thinks. But we are asking the right questions.

And the right questions include this one.

This is the first post in a series on AI-native thinking across software segments — telephony, workflow automation, dev tools, CRM, and more. Each post is built from direct experience and conversations with people who were inside these systems. A consolidated piece follows once the series is complete.

Next in the series: workflow automation — and the ceiling that trigger-action logic always hits.

If you’ve built in the telephony or voice AI space and have a perspective on where the infrastructure gaps are sharpest — I’d like to hear from you.